Open vSwitch (openvswitch, OVS) is an alternative to Linux native bridges, bonds, and VLAN interfaces. It supports most of the features you would find on a physical switch, including advanced features such as RSTP, VXLANs, and OpenFlow, and it supports multiple VLANs on a single bridge. If you need these features, it makes sense to switch to Open vSwitch.

Installation

Update the package index and then install the Open vSwitch packages by executing apt update followed by apt install openvswitch-switch.

Configuration

Official reference here, though a bit bare: https://github.com/openvswitch/ovs/blob/master/debian/openvswitch-switch.README.Debian

Overview

Open vSwitch and Linux bonding, bridging, or VLANs MUST NOT be mixed. For instance, do not attempt to add a VLAN to an OVS Bond, or add a Linux Bond to an OVSBridge, or vice versa. Open vSwitch is specifically tailored to function within virtualized environments, so there is no reason to use the native Linux functionality alongside it.

Bridges

A bridge is another term for a switch. It directs traffic to the appropriate interface based on MAC address. Open vSwitch bridges should contain raw Ethernet devices, along with virtual interfaces such as OVSBonds or OVSIntPorts. These bridges can carry multiple VLANs, and can be broken out into 'internal ports' to be used as VLAN interfaces on the host.

It is recommended that the bridge is bound to a trunk port with no untagged VLANs; this means that your bridge itself will never have an IP address. If you need to work with untagged traffic coming into the bridge, tag it (assign it to a VLAN) on the originating interface before it enters the bridge. You can assign an IP address on the bridge directly for that untagged data, but this is not recommended. You can split out your tagged VLANs using virtual interfaces (OVSIntPort) if you need access to those VLANs from your local host. Proxmox will assign the guest VMs a tap interface associated with a VLAN, so you do NOT need a bridge per VLAN (as classic Linux networking requires). You should think of your OVSBridge much like a physical hardware switch.

When configuring a bridge, in /etc/network/interfaces, prefix the bridge interface definition with allow-ovs $iface. For instance, a simple bridge containing a single interface would look like:
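A minimal sketch of such a bridge stanza, assuming the physical interface is named eth0 and the bridge vmbr0 (both names are illustrative):

```
# OVS bridge containing a single physical interface
allow-ovs vmbr0
iface vmbr0 inet manual
  ovs_type OVSBridge
  # every port that belongs to the bridge is listed here
  ovs_ports eth0
```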

Remember, if you want to split out vlans with ips for use on the local host, you should use OVSIntPorts, see sections to follow.

However, any interfaces (Physical, OVSBonds, or OVSIntPorts) associated with a bridge should have their definitions prefixed with allow-$brname $iface, e.g. allow-vmbr0 bond0

NOTE: All interfaces that are part of the bridge must be listed under ovs_ports, even if a port definition (e.g. an OVSIntPort) already cross-references the bridge.

Bonds

Bonds are used to join multiple network interfaces together to act as a single unit. Bonds must refer to raw Ethernet devices (e.g. eth0, eth1).

When configuring a bond, it is recommended to use LACP (aka 802.3ad) for link aggregation. This requires switch support on the other end. A simple bond using eth0 and eth1 that will be part of the vmbr0 bridge might look like this.
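A sketch of an LACP bond along these lines. The bond name bond0 and the specific ovs_options values (balance-tcp mode, active LACP, fast LACP timers) are assumptions for illustration; adjust them to match what your switch is configured for:

```
# LACP bond of eth0 and eth1, attached to the vmbr0 bridge
allow-vmbr0 bond0
iface bond0 inet manual
  ovs_bridge vmbr0
  ovs_type OVSBond
  ovs_bonds eth0 eth1
  # assumed options: balance-tcp requires LACP on the switch side
  ovs_options bond_mode=balance-tcp lacp=active other_config:lacp-time=fast
```

The bridge definition for vmbr0 would then list bond0 under ovs_ports.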

NOTE: The interfaces that are part of a bond do not need to have their own configuration section.

VLANs Host Interfaces

In order for the host (e.g. proxmox host, not VMs themselves!) to utilize a vlan within the bridge, you must create OVSIntPorts. These split out a virtual interface in the specified vlan that you can assign an ip address to (or use DHCP). You need to set ovs_options tag=$VLAN to let OVS know what vlan the interface should be a part of. In the switch world, this is commonly referred to as an RVI (Routed Virtual Interface), or IRB (Integrated Routing and Bridging) interface.

IMPORTANT: These OVSIntPorts you create MUST also show up in the actual bridge definition under ovs_ports. If they do not, they will NOT be brought up even though you specified an ovs_bridge. You also need to prefix the definition with allow-$bridge $iface

Setting up this vlan port would look like this in /etc/network/interfaces:
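A sketch of one such port, assuming VLAN 50 and a bridge named vmbr0. The interface name vlan50 and all addresses are placeholders, not values from a real network:

```
# Host interface in VLAN 50, split out of the vmbr0 bridge
allow-vmbr0 vlan50
iface vlan50 inet static
  ovs_type OVSIntPort
  ovs_bridge vmbr0
  ovs_options tag=50
  # placeholder addressing
  address 10.50.10.44
  netmask 255.255.255.0
  gateway 10.50.10.1
```

The vmbr0 stanza must also list vlan50 under ovs_ports, otherwise the port is not brought up.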

Rapid Spanning Tree (RSTP)

Open vSwitch supports the Rapid Spanning Tree Protocol, but it is disabled by default. Rapid Spanning Tree is a network protocol used to prevent loops in a bridged Ethernet local area network.

WARNING: The stock PVE 4.4 kernel panics; you must use a 4.5 or higher kernel for stability. Also, the Intel i40e driver is known not to work. Older-generation Intel NICs that use ixgbe are fine, as are Mellanox adapters that use the mlx5 driver.

In order to configure a bridge for RSTP support, you must use an 'up' script as the 'ovs_options' and 'ovs_extras' options do not emit the proper commands. An example would be to add this to your 'vmbr0' interface configuration:
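A sketch of such an 'up' line, which sets the rstp_enable column of the Bridge record through ovs-vsctl. It assumes ifupdown exports the interface name as IFACE, which it does for up/post-up commands:

```
# inside the vmbr0 stanza
iface vmbr0 inet manual
  ovs_type OVSBridge
  ovs_ports eth0
  # enable RSTP on this bridge once it is up
  up ovs-vsctl set Bridge ${IFACE} rstp_enable=true
```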

It may be wise to also set a 'post-up' script that sleeps for 10 or so seconds waiting on RSTP convergence before boot continues.

Other bridge options that may be set are:

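- other_config:rstp-priority= Configures the root bridge priority; the lower the value, the more likely the bridge is to become the root bridge. It is recommended to set this to the maximum value of 0xFFFF to prevent Open vSwitch from becoming the root bridge. Per the Open vSwitch documentation, the value should be a multiple of 4096 and is otherwise rounded down, so 0xFFFF is effectively 61440. The default value is 0x8000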

- other_config:rstp-forward-delay= The amount of time the bridge will sit in learning mode before entering a forwarding state. Range is 4-30, Default 15

- other_config:rstp-max-age= The maximum age, in seconds, of received protocol information before it is discarded. Range is 6-40, Default 20

You should also consider adding a cost value to all interfaces that are part of a bridge. You can do so in the ethX interface configuration:
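For instance, a sketch of a 1GbE port with an explicit path cost. The interface name eth0 is illustrative, and 20000 is simply the 1GbE default quoted in this section:

```
allow-vmbr0 eth0
iface eth0 inet manual
  ovs_bridge vmbr0
  ovs_type OVSPort
  # lower cost = preferred path; 20000 matches the 1GbE default
  ovs_options other_config:rstp-path-cost=20000
```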

Interface options that may be set via ovs_options are:

- other_config:rstp-path-cost= Default 2000 for 10GbE, 20000 for 1GbE

- other_config:rstp-port-admin-edge= Set to False if this is known to be connected to a switch running RSTP to prevent entering forwarding state if no BDPUs are detected

- other_config:rstp-port-auto-edge= Set to False if this is known to be connected to a switch running RSTP to prevent entering a forwarding state if no BDPUs are detected

- other_config:rstp-port-mcheck= Set to True if the other end is known to be using RSTP and not STP, will broadcast BDPUs immediately on link detection

You can look at the RSTP status for an interface via ovs-vsctl get Port eth0 rstp_status (substitute the name of your own port for eth0).

NOTE: Open vSwitch does not currently allow a bond to participate in RSTP.

Note on MTU

If you plan on using an MTU larger than the default of 1500, you need to mark any physical interfaces, bonds, and bridges with a larger MTU by adding an mtu setting to the definition, such as mtu 9000; otherwise the larger MTU will be disallowed.
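As a sketch, a bridge and its physical port both marked for jumbo frames might look like the following (interface names are illustrative):

```
allow-vmbr0 eth0
iface eth0 inet manual
  ovs_bridge vmbr0
  ovs_type OVSPort
  mtu 9000

allow-ovs vmbr0
iface vmbr0 inet manual
  ovs_type OVSBridge
  ovs_ports eth0
  mtu 9000
```

Internal ports that only need standard frames can still set mtu 1500 in their own stanza.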

Odd Note: Some newer Intel Gigabit NICs have a hardware limitation which means the maximum MTU they can support is 8996 (instead of 9000). If your interfaces aren't coming up and you are trying to use 9000, this is likely the reason and can be difficult to debug. Try setting all your MTUs to 8996 and see if it resolves your issues.

Examples

Example 1: Bridge + Internal Ports + Untagged traffic

The below example shows you how to create a bridge with one physical interface, with 2 vlan interfaces split out, and tagging untagged traffic coming in on eth0 to vlan 1.

This is a complete and working /etc/network/interfaces listing:
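A sketch of what such a listing can look like. The interface names, the second VLAN (55), and every address are assumptions chosen for illustration and need to be adapted to your own network:

```
# Loopback interface
auto lo
iface lo inet loopback

# Bridge for the physical interface and the VLAN virtual interfaces
# (VMs also attach to this bridge)
auto vmbr0
allow-ovs vmbr0
iface vmbr0 inet manual
  ovs_type OVSBridge
  # eth0, vlan1 and vlan55 must be listed here even though each
  # of them also names vmbr0 as its ovs_bridge
  ovs_ports eth0 vlan1 vlan55
  mtu 9000

# Physical interface. Untagged traffic is retagged into VLAN 1,
# other tags are passed through unchanged.
auto eth0
allow-vmbr0 eth0
iface eth0 inet manual
  ovs_bridge vmbr0
  ovs_type OVSPort
  ovs_options tag=1 vlan_mode=native-untagged
  mtu 9000

# Host interface for the originally untagged traffic (VLAN 1)
allow-vmbr0 vlan1
iface vlan1 inet static
  ovs_type OVSIntPort
  ovs_bridge vmbr0
  ovs_options tag=1
  address 10.50.10.44
  netmask 255.255.255.0
  gateway 10.50.10.1
  mtu 1500

# Host interface in a second VLAN (assumed to carry jumbo frames)
allow-vmbr0 vlan55
iface vlan55 inet static
  ovs_type OVSIntPort
  ovs_bridge vmbr0
  ovs_options tag=55
  address 10.55.10.44
  netmask 255.255.255.0
  mtu 9000
```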

Example 2: Bond + Bridge + Internal Ports

The below example shows you a combination of all the above features. 2 NICs are bonded together and added to an OVS Bridge. 2 vlan interfaces are split out in order to provide the host access to vlans with different MTUs.

This is a complete and working /etc/network/interfaces listing:
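A sketch of what such a listing can look like. The VLAN numbers (50 and 55), the LACP options, and all addresses are illustrative assumptions:

```
# Loopback interface
auto lo
iface lo inet loopback

# LACP bond of the two physical NICs
allow-vmbr0 bond0
iface bond0 inet manual
  ovs_bridge vmbr0
  ovs_type OVSBond
  ovs_bonds eth0 eth1
  ovs_options bond_mode=balance-tcp lacp=active other_config:lacp-time=fast
  mtu 9000

# Bridge for the bond and the VLAN virtual interfaces
auto vmbr0
allow-ovs vmbr0
iface vmbr0 inet manual
  ovs_type OVSBridge
  # bond0, vlan50 and vlan55 must all be listed here
  ovs_ports bond0 vlan50 vlan55
  mtu 9000

# Host interface in VLAN 50 with the standard MTU
allow-vmbr0 vlan50
iface vlan50 inet static
  ovs_type OVSIntPort
  ovs_bridge vmbr0
  ovs_options tag=50
  address 10.50.10.44
  netmask 255.255.255.0
  gateway 10.50.10.1
  mtu 1500

# Host interface in VLAN 55 with jumbo frames
allow-vmbr0 vlan55
iface vlan55 inet static
  ovs_type OVSIntPort
  ovs_bridge vmbr0
  ovs_options tag=55
  address 10.55.10.44
  netmask 255.255.255.0
  mtu 9000
```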

Example 3: Bond + Bridge + Internal Ports + Untagged traffic + No LACP

The below example shows you a combination of all the above features. 2 NICs are bonded together and added to an OVS Bridge. This example imitates the default proxmox network configuration but using a bond instead of a single NIC and the bond will work without a managed switch which supports LACP.

This is a complete and working /etc/network/interfaces listing:
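A sketch of what such a listing can look like. It assumes balance-slb as the bond mode, since that mode does not need LACP on the switch, and uses placeholder addresses:

```
# Loopback interface
auto lo
iface lo inet loopback

# Bond without LACP. Untagged traffic is retagged into VLAN 1.
allow-vmbr0 bond0
iface bond0 inet manual
  ovs_bridge vmbr0
  ovs_type OVSBond
  ovs_bonds eth0 eth1
  ovs_options bond_mode=balance-slb tag=1 vlan_mode=native-untagged

# Bridge for the bond and the host interface
auto vmbr0
allow-ovs vmbr0
iface vmbr0 inet manual
  ovs_type OVSBridge
  ovs_ports bond0 vlan1

# Host interface for the originally untagged traffic
allow-vmbr0 vlan1
iface vlan1 inet static
  ovs_type OVSIntPort
  ovs_bridge vmbr0
  ovs_options tag=1
  address 192.168.3.5
  netmask 255.255.255.0
  gateway 192.168.3.254
```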

Example 4: Rapid Spanning Tree (RSTP) - 1Gbps uplink, 10Gbps interconnect

WARNING: The stock PVE 4.4 kernel panics; you must use a 4.5 or higher kernel for stability. Also, the Intel i40e driver is known not to work. Older-generation Intel NICs that use ixgbe are fine, as are Mellanox adapters that use the mlx5 driver.

This example shows how you can use Rapid Spanning Tree (RSTP) to interconnect your Proxmox nodes inexpensively and uplink to your core switches for external traffic, all while maintaining a fully fault-tolerant interconnection scheme. This means VM<->VM access (or possibly Ceph<->Ceph) can operate at the speed of the network interfaces directly attached in a star or ring topology. In this example, we are using 10Gbps to interconnect our 3 nodes (direct-attach), and uplink to our core switches at 1Gbps. Spanning Tree configured with the right cost metrics will prevent loops and activate the optimal paths for traffic. We are using this topology because 10Gbps switch ports are very expensive, so this is strictly a cost-saving manoeuvre. You could use 40Gbps ports instead of 10Gbps ports; the key point is that the interfaces used to interconnect the nodes are higher-speed than the interfaces used to connect to the core switches.

This assumes you are using Open vSwitch 2.5+. Older versions did not support Rapid Spanning Tree, only Spanning Tree, which had some issues.

To better explain what we are accomplishing, look at this ascii-art representation below:

This is a complete and working /etc/network/interfaces listing:
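A sketch of what one node's listing can look like. Everything specific here is an assumption for illustration: eth0 is taken to be the 1Gbps uplink, eth1 and eth2 the 10Gbps direct-attach links, untagged traffic is placed in VLAN 1, the path costs are the defaults quoted in the RSTP section, and the addresses are placeholders:

```
# Loopback interface
auto lo
iface lo inet loopback

# 1Gbps uplink to the core switch (higher cost, less preferred)
allow-vmbr0 eth0
iface eth0 inet manual
  ovs_bridge vmbr0
  ovs_type OVSPort
  ovs_options tag=1 vlan_mode=native-untagged other_config:rstp-path-cost=20000 other_config:rstp-port-admin-edge=false other_config:rstp-port-auto-edge=false other_config:rstp-port-mcheck=true

# 10Gbps direct-attach link to the second node (lower cost, preferred)
allow-vmbr0 eth1
iface eth1 inet manual
  ovs_bridge vmbr0
  ovs_type OVSPort
  ovs_options tag=1 vlan_mode=native-untagged other_config:rstp-path-cost=2000 other_config:rstp-port-admin-edge=false other_config:rstp-port-auto-edge=false other_config:rstp-port-mcheck=true

# 10Gbps direct-attach link to the third node
allow-vmbr0 eth2
iface eth2 inet manual
  ovs_bridge vmbr0
  ovs_type OVSPort
  ovs_options tag=1 vlan_mode=native-untagged other_config:rstp-path-cost=2000 other_config:rstp-port-admin-edge=false other_config:rstp-port-auto-edge=false other_config:rstp-port-mcheck=true

# Bridge with RSTP enabled and a high priority value so that the
# core switches, not this node, become the root bridge
auto vmbr0
allow-ovs vmbr0
iface vmbr0 inet manual
  ovs_type OVSBridge
  ovs_ports eth0 eth1 eth2 vlan1
  up ovs-vsctl set Bridge ${IFACE} rstp_enable=true other_config:rstp-priority=61440
  # give RSTP time to converge before boot continues
  post-up sleep 10

# Host interface for the originally untagged traffic
allow-vmbr0 vlan1
iface vlan1 inet static
  ovs_type OVSIntPort
  ovs_bridge vmbr0
  ovs_options tag=1
  address 10.50.10.44
  netmask 255.255.255.0
  gateway 10.50.10.1
```

The other two nodes would use the same layout with their own addresses.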

On our Juniper core switches, we put in place this configuration:

Inspecting:

Multicast

Right now Open vSwitch doesn't do anything with regard to multicast. Where you might typically tell Linux to enable the multicast querier on the bridge, you should instead set up your querier at your router or switch. Please refer to the Multicast_notes wiki for more information.

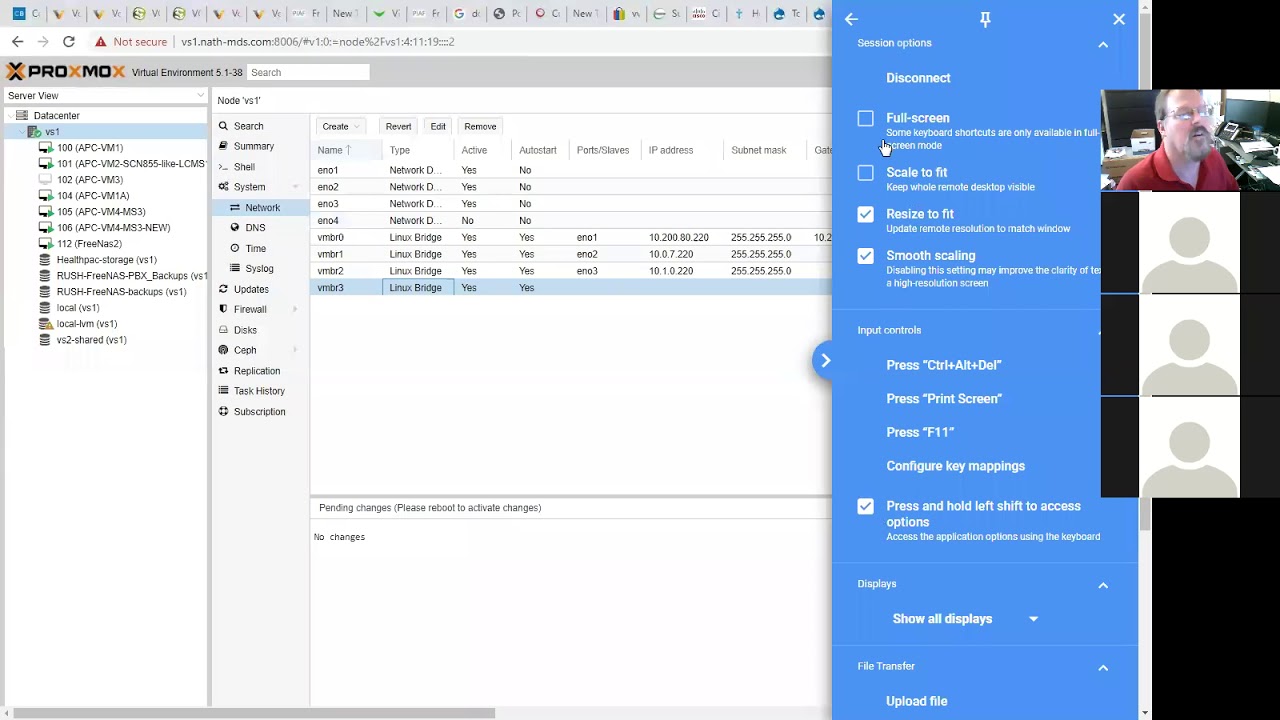

Using Open vSwitch in Proxmox

Using Open vSwitch isn't that much different than using normal linux bridges. The main difference is instead of having a bridge per vlan, you have a single bridge containing all your vlans. Then when configuring the network interface for the VM, you would select the bridge (probably the only bridge you have), and you would also enter the VLAN Tag associated with the VLAN you want your VM to be a part of. Now there is zero effort when adding or removing VLANs!
